Nakivo marks another important milestone: during VMworld 2017, the emerging Silicon Valley company released version 7.2, which, alongside some usability features, improves the protection of Microsoft SQL Server environments by enabling:

- Transaction Log Truncation for Microsoft SQL Server

- Instant Object Recovery for Microsoft SQL Server

Integration with ASUSTOR NAS is another interesting feature that came with 7.2. Extending integration to more NAS systems is a continuing focus of Nakivo development, aimed at broadening support for mid-range storage and providing an embedded solution to protect your data.

The other new features are the Calendar Dashboard and a more flexible backup job scheduler.

If you want to give it a try, check here: https://www.nakivo.com/resources/download/trial-download/

Protection in the cloud

In many scenarios, cloud adoption is driven by the need to scale out local resources whose limits could affect service availability and performance. Whether you are on-premises or in the cloud, protecting your data is mission critical for every deployment. In fact, the cloud is the new focus for the latest availability software, which uses backup processes to protect and recover data and workloads across multiple platforms and multiple data centers. But where should the backup data be stored in all these situations?

There are three minimum requirements for true data protection:

- Store backups off the box

- Store backups on a different medium

- Store backups outside the data center
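The three rules can be read as three independent checks against the location of the primary data. The sketch below is purely illustrative: the `Location` fields and the example host, media and site names are hypothetical, not a Nakivo data model. Each rule is satisfied if at least one backup copy differs from the primary in that dimension.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Location:
    """Where a copy of the data lives (hypothetical, illustrative fields)."""
    host: str        # physical box or appliance holding the data
    media: str       # storage medium, e.g. "vmfs-ssd", "nas-hdd", "ebs"
    datacenter: str  # site or cloud region


def meets_three_rules(primary: Location, copies: list[Location]) -> dict[str, bool]:
    """For each rule, report whether at least one backup copy satisfies it."""
    return {
        "off_box": any(c.host != primary.host for c in copies),
        "different_media": any(c.media != primary.media for c in copies),
        "off_site": any(c.datacenter != primary.datacenter for c in copies),
    }
```

For example, a copy on a NAS in the same site (`Location("nas01", "nas-hdd", "onprem-dc")`) satisfies the first two rules but not the third; adding a copy in an AWS region closes the gap. A stricter policy could instead require a single copy that satisfies all three rules at once.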

Protecting data outside the data center with the public cloud is where more and more disaster recovery projects are landing: it reduces cost, or more precisely TCO, with a pay-as-you-go model that avoids wasting money and resources.

Nakivo is investing heavily in this field and has built an interesting cross-region protection feature for Amazon EC2. But this is not the only option: you can also run Nakivo in AWS to protect your vSphere virtual machines. Let’s look at this in depth.

Hybrid cloud with Nakivo

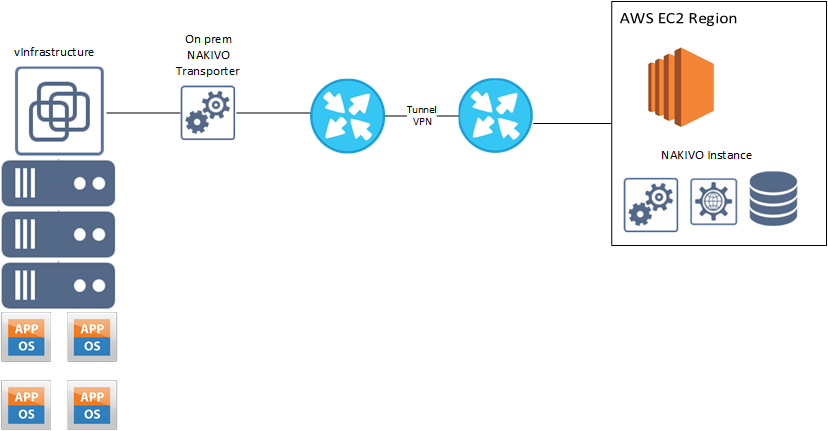

In my previous post (My Hybrid environment with AWS and pfSense) I built a lab using AWS and pfSense to create a hybrid cloud that simply uses a network VPN to provide a secure cloud environment. What I didn’t reveal was the reason for building this lab: testing Nakivo in a hybrid environment to build off-site protection.

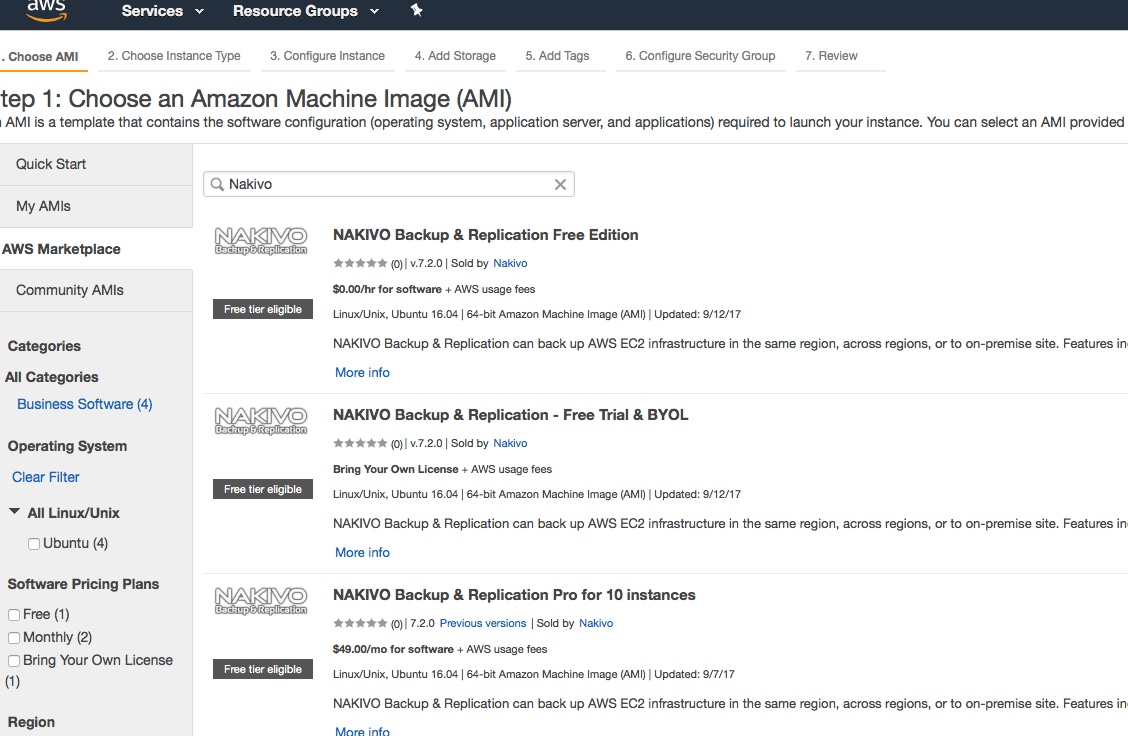

In AWS, simply launch an EC2 instance from the Nakivo AMI, which is available in the AWS Marketplace.

Note: the default Nakivo admin password is automatically generated from the AWS instance ID. Feel free to change it; better yet, I suggest doing it right away, since you can never have too much security.

It’s important that packets from the Nakivo instance in AWS can be routed to vCenter, the ESXi hosts and the Transporters. If you’re following my lab architecture, as described in my previous post, don’t forget to configure routing and disable the source/destination check.
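A quick sanity check before adding anything to the inventory is to confirm that every on-premises address Nakivo must reach falls inside a CIDR that the VPC route table sends over the VPN. This is a minimal sketch using only the standard `ipaddress` module; the CIDRs and IP addresses are hypothetical lab values, and it only checks route coverage, not whether the VPN is actually up.

```python
import ipaddress


def unrouted_targets(route_cidrs: list[str], targets: dict[str, str]) -> list[str]:
    """Return the names of targets whose IP is not covered by any routed CIDR."""
    networks = [ipaddress.ip_network(cidr) for cidr in route_cidrs]
    return [
        name
        for name, ip in targets.items()
        if not any(ipaddress.ip_address(ip) in net for net in networks)
    ]


# Hypothetical lab values: on-premises CIDRs routed through the VPN instance.
ROUTES = ["192.168.1.0/24"]
TARGETS = {
    "vcenter": "192.168.1.10",
    "esxi01": "192.168.1.21",
    "transporter": "192.168.2.30",
}
```

Here `unrouted_targets(ROUTES, TARGETS)` flags the Transporter, whose subnet is missing from the route table. The source/destination check is a separate per-instance attribute that must be disabled on any EC2 instance forwarding traffic not addressed to itself; with the AWS CLI this is `aws ec2 modify-instance-attribute --instance-id <instance-id> --no-source-dest-check`.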

Now it’s time to configure the Inventory, Transporters and Repository. Before going ahead, I suggest verifying connectivity between the Transporter and vCenter using SSH (in AWS, log in with the instance’s SSH key pair).
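Once logged in over SSH, a TCP connect test tells you more than a ping, because it proves the actual service port is reachable through the VPN and any firewall rules. The sketch below assumes Python 3 is available on the machine you test from; the addresses are hypothetical, and port 443 is the standard HTTPS port used by vCenter and ESXi. For Transporter ports, check Nakivo’s documentation rather than guessing.

```python
import socket


def port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable or timed out
        return False


# Hypothetical lab addresses; replace them with your own.
CHECKS = {
    "vCenter (HTTPS)": ("192.168.1.10", 443),
    "ESXi host (HTTPS)": ("192.168.1.21", 443),
}
```

To run the checks, loop over `CHECKS` and print `port_open(host, port)` for each entry. A timeout usually points to routing or the source/destination check, while a quick "connection refused" means packets arrive but nothing is listening on that port.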

- Add vCenter to the Inventory (note: if you have a local DNS server, I suggest adding it to resolv.conf on the Nakivo EC2 instance or, better, deploying a DNS instance in AWS that relays to your local DNS configuration)

- Configure the local (127.0.0.1) Transporter

- Deploy a remote Transporter in one of two ways:

  - Deploy a Transporter-only Nakivo instance in the on-premises infrastructure (a simple VM with 2 vCPUs and 2 GB of RAM)

  - Use an existing local Nakivo VM (my preferred option)

- Configure the remote Transporter
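For the DNS note in the first step above (adding a local DNS server to resolv.conf), the key detail is ordering: the resolver tries `nameserver` entries in order, and glibc uses only the first three. This is a minimal sketch that puts a hypothetical on-premises DNS server first without duplicating it. It works on text only, so you can review the result before writing the file. Depending on the distribution, resolv.conf may be regenerated by the DHCP client or systemd-resolved, so a manual edit might not persist; I have not checked how the Nakivo AMI manages it.

```python
def add_nameserver(resolv_conf: str, dns_ip: str) -> str:
    """Return resolv.conf text with dns_ip as the first nameserver entry.

    An existing entry for dns_ip is moved rather than duplicated; all other
    lines (search, options, comments) keep their original order.
    """
    lines = [
        line for line in resolv_conf.splitlines()
        if line.split()[:2] != ["nameserver", dns_ip]
    ]
    # Insert before the first existing nameserver line, or at the end if none.
    idx = next(
        (i for i, line in enumerate(lines) if line.split()[:1] == ["nameserver"]),
        len(lines),
    )
    lines.insert(idx, f"nameserver {dns_ip}")
    return "\n".join(lines) + "\n"
```

Running it twice with the same address gives the same result, which makes it safe to use from a provisioning script.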

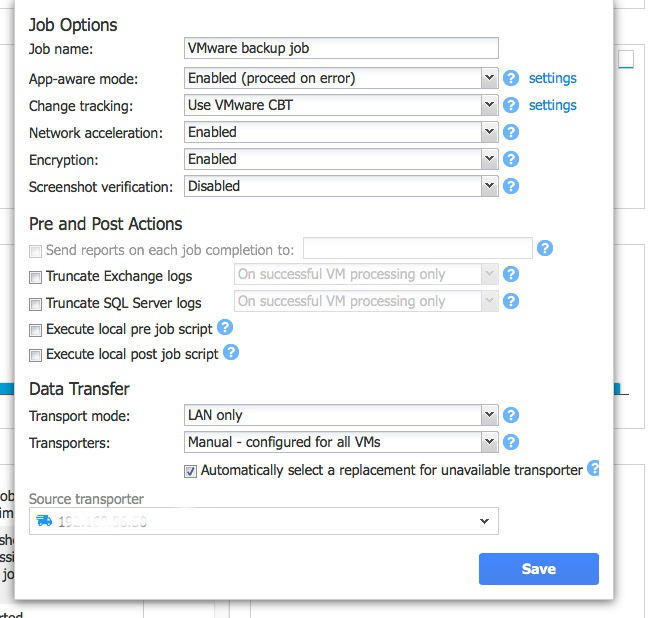

Configuring the job in this scenario follows the same steps used to configure Nakivo in an on-premises environment; the only thing to pay attention to is choosing the on-premises Transporter as the local Transporter. I also suggest enabling network acceleration and encryption to ensure performance and security.

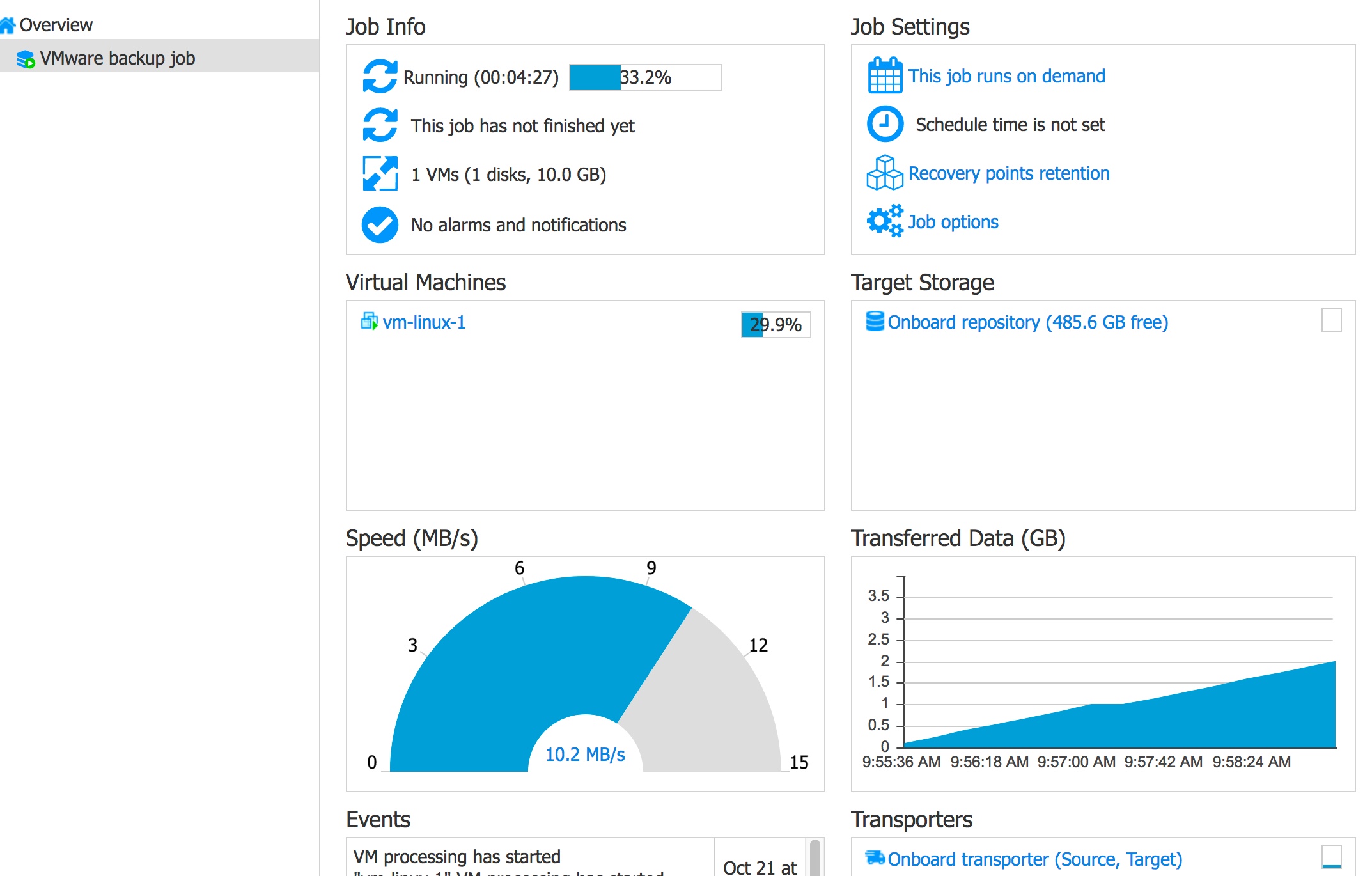

The first backup can take longer than expected (in my lab, with poor connectivity, it took about 2 hours per 10 GB). For this reason, I suggest starting with a small number of virtual machines per job. Once the initial backup is complete, you can set up the desired schedule.
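The lab figure of about 10 GB every 2 hours works out to roughly 5 GB per hour, or about 11 Mbit/s (assuming decimal gigabytes). That makes it easy to estimate how long an initial full backup will take and to split VMs into jobs that each fit in a chosen time window. The sketch below uses first-fit decreasing bin packing, a simple heuristic I picked for illustration; it is not a Nakivo feature, and the VM names and sizes are hypothetical.

```python
# Effective throughput observed in the lab above: ~10 GB in ~2 hours.
LAB_GB_PER_HOUR = 10 / 2


def initial_backup_hours(size_gb: float, gb_per_hour: float = LAB_GB_PER_HOUR) -> float:
    """Estimated duration of the first (full) backup of size_gb."""
    return size_gb / gb_per_hour


def plan_jobs(vm_sizes_gb: dict[str, float], window_hours: float,
              gb_per_hour: float = LAB_GB_PER_HOUR) -> list[list[str]]:
    """Group VMs into jobs whose initial backup fits within window_hours.

    First-fit decreasing: place the largest VMs first, each into the first
    job that still has room. A VM larger than the window gets its own job.
    """
    budget_gb = window_hours * gb_per_hour
    jobs: list[list[str]] = []
    loads: list[float] = []
    for name, size in sorted(vm_sizes_gb.items(), key=lambda kv: kv[1], reverse=True):
        for i, load in enumerate(loads):
            if load + size <= budget_gb:
                jobs[i].append(name)
                loads[i] += size
                break
        else:
            jobs.append([name])
            loads.append(size)
    return jobs
```

With an 8-hour window (a 40 GB budget at lab speed), VMs of 40, 30, 20 and 10 GB end up in three jobs: the 40 GB VM alone, the 30 GB and 10 GB VMs together, and the 20 GB VM alone.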

Subsequent backups are very fast and don’t consume inter-region AWS bandwidth.
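Subsequent runs are light because, after the first full copy, only changed data has to cross the VPN. For VMware, incremental backups typically rely on Changed Block Tracking (CBT), where ESXi records which disk areas were written since a given point. The sketch below illustrates only the general idea of a block-level incremental, using block hashes. It is not Nakivo’s implementation and not how CBT works internally (CBT tracks writes instead of comparing hashes). The tiny block size is chosen only to keep the example readable.

```python
import hashlib

BLOCK_SIZE = 4  # illustrative only; real backup block sizes are far larger


def block_hashes(data: bytes, block_size: int = BLOCK_SIZE) -> list[str]:
    """SHA-256 digest of each fixed-size block of data."""
    return [
        hashlib.sha256(data[i:i + block_size]).hexdigest()
        for i in range(0, len(data), block_size)
    ]


def changed_blocks(previous: list[str], current: list[str]) -> list[int]:
    """Indices of blocks that are new or differ from the previous backup."""
    return [
        i for i, digest in enumerate(current)
        if i >= len(previous) or previous[i] != digest
    ]
```

Comparing `b"AAAABBBBCCCC"` with `b"AAAAXXXXCCCCDDDD"` yields blocks 1 and 3, so only 8 of 16 bytes would need to be transferred.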

Next steps

This demonstrates how simple it can be to respect the three fundamental rules of backup: off the box, on a different medium and in a different data center (in the cloud).

In my next Nakivo post, I will show how to integrate this stretched environment using the REST API and PowerCLI.

Disclaimer

This post is sponsored by Nakivo. The thoughts and experiences about the Nakivo product are my own.