File exchange and governance are still a hot topic in many conversations with companies of every size. The challenge is how to share, modify and keep data under control across multiple environments and on the road, while maintaining good security and control over what is held and shared.

During Cloud Field Day 6 I had the pleasure of meeting Peter Thompson (CEO and co-founder), George Dochev (CTO and co-founder) and Johan Huttenga (Solution Architect).

About LucidLink… during Cloud Field Day 6

LucidLink is a software company headquartered in San Francisco, CA, with its development and test divisions in Sofia (EU). The idea behind the product is to use the simplicity of HTTPS to transfer data and metadata. To reduce latency and increase speed, only metadata is synced locally; file content is streamed on request. Encryption and deduplication run at the “client” level to reduce effort, increase security, and save capacity and bandwidth.

The combination of these features is the “secret sauce” behind great usability across distant branches. Also, the broad integration with S3 storage (owned or cloud-provided) opens the product to many use cases built on object storage data.
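To make the idea more concrete, here is a minimal Python sketch of the pattern described above: metadata kept locally, content fetched only when a file is read, and deduplication done on the client before anything is uploaded. This is not LucidLink's implementation. The `ObjectStore` and `Client` classes, the tiny chunk size and the use of SHA-256 are all my own assumptions for illustration, and encryption is left out to keep it short.

```python
import hashlib

CHUNK_SIZE = 4  # unrealistically small, only to keep the example readable


class ObjectStore:
    """Hypothetical stand-in for an S3 bucket: chunk hash -> chunk bytes."""

    def __init__(self):
        self.chunks = {}
        self.uploads = 0  # number of chunks actually sent to the store

    def has(self, digest):
        return digest in self.chunks

    def put(self, digest, data):
        self.chunks[digest] = data
        self.uploads += 1

    def get(self, digest):
        return self.chunks[digest]


class Client:
    """Hypothetical client: metadata is local, content is fetched on demand."""

    def __init__(self, store):
        self.store = store
        self.metadata = {}  # file name -> ordered list of chunk hashes
        self.cache = {}     # chunk hash -> bytes already streamed

    def write(self, name, content):
        digests = []
        for i in range(0, len(content), CHUNK_SIZE):
            chunk = content[i:i + CHUNK_SIZE]
            digest = hashlib.sha256(chunk).hexdigest()
            # Client-side deduplication: upload only chunks the store lacks.
            if not self.store.has(digest):
                self.store.put(digest, chunk)
            digests.append(digest)
        self.metadata[name] = digests

    def read(self, name):
        parts = []
        for digest in self.metadata[name]:
            # Content is pulled from the store only when it is requested.
            if digest not in self.cache:
                self.cache[digest] = self.store.get(digest)
            parts.append(self.cache[digest])
        return b"".join(parts)


if __name__ == "__main__":
    store = ObjectStore()
    client = Client(store)
    client.write("demo.bin", b"AAAABBBBAAAA")
    print(store.uploads)            # chunks uploaded after deduplication
    print(client.read("demo.bin"))  # content streamed back on request
```

In this toy run the file has three chunks but only two distinct ones, so only two are uploaded, which is the kind of capacity and bandwidth saving the text refers to.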

For more about the technology, check the Cloud Field Day recording here:

https://www.youtube.com/watch?v=Oyjs9ZOy7z8

The test…

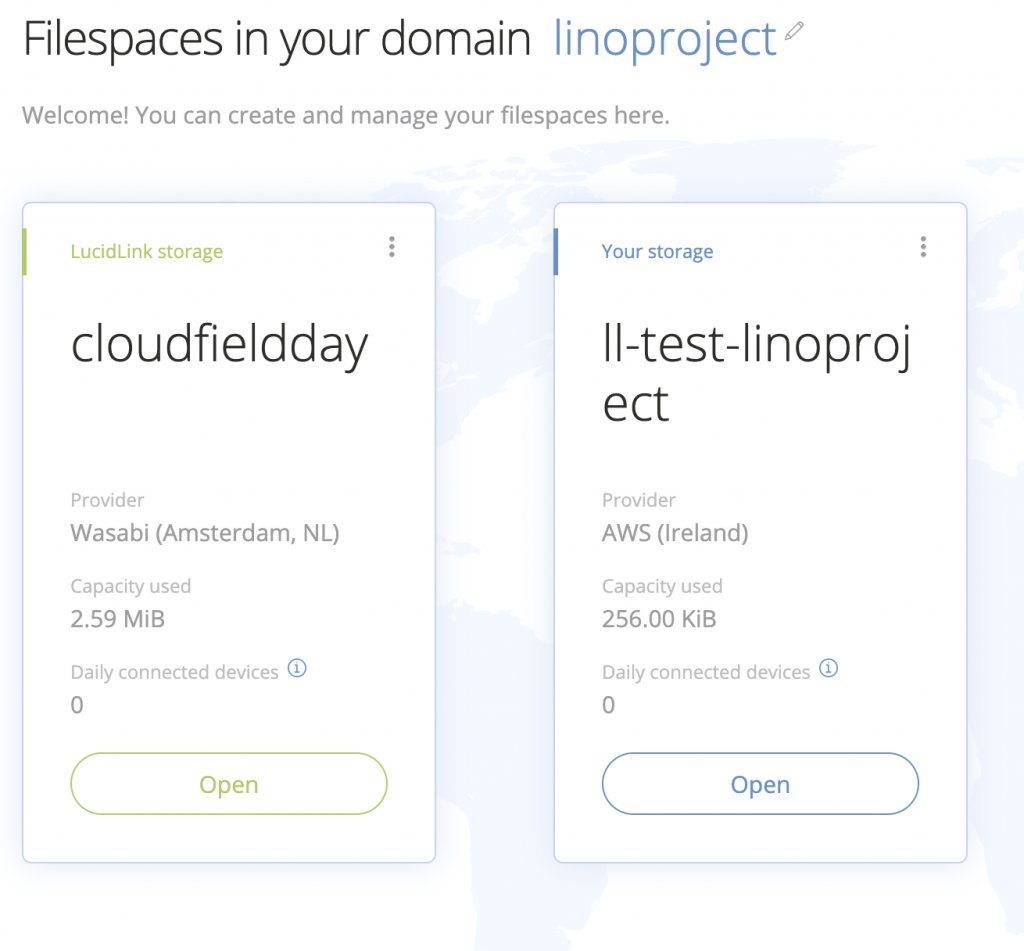

After a quick registration through the “Getting started” menu on the official site, I was ready to begin using the cloud platform. After creating my domain, I could create the filespace using either LucidLink storage (in the cloud) or my own storage.

After downloading the client (available for all desktop platforms), it is possible to initialize the filespace. Then simply create users, and with the client application you can copy and share files.

Trying something different: LucidLink can handle S3-compatible storage such as AWS S3, Scality and more… For this next test, I’m going to use my dev account on AWS with an S3 bucket. Here is my CloudFormation template:

```yaml
---
AWSTemplateFormatVersion: '2010-09-09'
Description: Create S3 bucket and prepare for Lucidlink

Parameters:
  LLFileSpaceName:
    Default: ll-test-linoproject
    Description: Name used as prefix of the other resources params
    Type: String

Resources:
  LLIAMUser:
    Type: AWS::IAM::User
    Properties:
      UserName: !Ref LLFileSpaceName

  LLIAMUserKey:
    Type: AWS::IAM::AccessKey
    Properties:
      UserName: !Ref LLFileSpaceName
    DependsOn: LLIAMUser

  LLIAMPolicy:
    Type: AWS::IAM::Policy
    Properties:
      PolicyName: !Join ['-',[!Ref LLFileSpaceName ,'Policy' ]]
      PolicyDocument:
        Version: "2012-10-17"
        Statement:
          - Effect: Allow
            Resource:
              - !Join ['',['arn:aws:s3:::',!Ref LLFileSpaceName,'/*']]
              - !Join ['',['arn:aws:s3:::',!Ref LLFileSpaceName]]
            Action: ["s3:PutObject","s3:GetObject","s3:ListBucket","s3:DeleteObject"]
      Users: [!Ref LLIAMUser]
    DependsOn: LLIAMUser

  LLS3Bucket:
    Type: AWS::S3::Bucket
    Properties:
      BucketName: !Ref LLFileSpaceName

Outputs:
  LLIAMUserId:
    Value: !Ref LLIAMUserKey
  LLIAMUserSecret:
    Description: IAM secret created by IAM Access Key Element
    Value: !GetAtt LLIAMUserKey.SecretAccessKey
```
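To see exactly what the two `!Join` expressions in the template above resolve to, here is a small Python sketch that builds the same policy document for a given bucket name. The function name `build_policy` is my own; the actions and ARN patterns are copied from the template. Treat it as an illustration of the resulting JSON, not as something LucidLink or AWS provides.

```python
import json


def build_policy(bucket_name):
    """Return the policy document the template produces for one bucket."""
    bucket_arn = f"arn:aws:s3:::{bucket_name}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # First ARN covers the objects, second covers the bucket itself.
                "Resource": [f"{bucket_arn}/*", bucket_arn],
                "Action": [
                    "s3:PutObject",
                    "s3:GetObject",
                    "s3:ListBucket",
                    "s3:DeleteObject",
                ],
            }
        ],
    }


if __name__ == "__main__":
    # Default value of the LLFileSpaceName parameter in the template.
    print(json.dumps(build_policy("ll-test-linoproject"), indent=2))
```

Both ARNs are needed because `s3:ListBucket` applies to the bucket, while the object actions apply to the keys under `/*`.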

Note: depending on your AWS environment, you may need to assign different permissions in the LLIAMPolicy resource. Check the official documentation here. Launch CloudFormation with CAPABILITY_NAMED_IAM in the capabilities switch:

```shell
aws cloudformation update-stack --template-body file://deploy.yaml --stack-name LuicidLink --capabilities CAPABILITY_NAMED_IAM
```

Finally, after grabbing the “accessid” and “secret” (from the user interface or the CLI), I was able to activate the AWS S3 bucket.
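If you go the CLI route, the two values are in the stack outputs returned by `aws cloudformation describe-stacks`. Here is a minimal Python sketch that pulls them out of that JSON. The helper name `stack_outputs` and the sample values are made up for illustration; the output keys `LLIAMUserId` and `LLIAMUserSecret` are the ones defined in the template.

```python
import json


def stack_outputs(describe_stacks_json):
    """Map OutputKey -> OutputValue for the first stack in the response."""
    data = json.loads(describe_stacks_json)
    outputs = data["Stacks"][0].get("Outputs", [])
    return {item["OutputKey"]: item["OutputValue"] for item in outputs}


# Trimmed-down response with placeholder values (not real credentials).
SAMPLE_RESPONSE = """
{
  "Stacks": [
    {
      "StackName": "LuicidLink",
      "Outputs": [
        {"OutputKey": "LLIAMUserId", "OutputValue": "AKIAEXAMPLE"},
        {"OutputKey": "LLIAMUserSecret", "OutputValue": "example-secret"}
      ]
    }
  ]
}
"""

if __name__ == "__main__":
    outputs = stack_outputs(SAMPLE_RESPONSE)
    print(outputs["LLIAMUserId"])
    print(outputs["LLIAMUserSecret"])
```

In practice you would feed it the output of `aws cloudformation describe-stacks --stack-name LuicidLink` instead of the sample string.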

Wrap up

So, performance is really high, and the choice of HTTPS together with the separation between metadata synchronization and data streaming strikes an interesting balance between performance and efficiency, especially for remote offices where bandwidth and capacity savings are still mission-critical.

They are still developing some important features to improve security and usability, and the energy of this company is great. My suggestion: if you have an S3 bucket on-premises or in the cloud, just give it a try (13-day evaluation with full features).

Disclaimer: I was invited to Cloud Field Day 6 by Gestalt IT, who paid for travel, hotel, meals and transportation. I did not receive any compensation for this event, and no one obliged me to write any content for this blog or other publications. The contents of these blog posts represent my personal opinions about the technologies presented during this event.
